I know I write a lot about my disillusionment with modern psychiatry. I have lamented the overriding psychopharmacological imperative, the emphasis on rapid diagnosis and medication management, at the expense of understanding the whole patient and developing truly “personalized” treatments. But at the risk of sounding like even more of a heretic, I’ve noticed that not only do psychopharmacologists really believe in what they’re doing, but they often believe it even in the face of evidence to the contrary.

It all makes me wonder whether we’re practicing a sort of pseudoscience.

For those of you unfamiliar with the term, check out Wikipedia, which defines “pseudoscience” as: “a claim, belief, or practice which is presented as scientific, but which does not adhere to a valid scientific method, lacks supporting evidence or plausibility, cannot be reliably tested…. [is] often characterized by the use of vague, exaggerated or unprovable claims [and] an over-reliance on confirmation rather than rigorous attempts at refutation…”

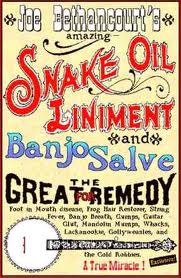

Among the medical-scientific community (of which I am a part, by virtue of my training), the label of “pseudoscience” is often reserved for practices like acupuncture, naturopathy, and chiropractic. Each may have its own adherents, its own scientific language or approach, and even its own curative power, but taken as a whole, their claims are frequently “vague or exaggerated,” and they fail to generate hypotheses which can then be proven or (even better) refuted in an attempt to refine disease models.

Does clinical psychopharmacology fit in the same category?

Before going further, I should emphasize I’m referring to clinical psychopharmacology: namely, the practice of prescribing medications (or combinations thereof) to actual patients, in an attempt to treat illness. I’m not referring to the type of psychopharmacology practiced in research laboratories or even in clinical research settings, where there is an accepted scientific method, and an attempt to test hypotheses (even though some premises, like DSM diagnoses or biological mechanisms, may be erroneous) according to established scientific principles.

The scientific method consists of: (1) observing a phenomenon; (2) developing a hypothesis; (3) making a prediction based on that hypothesis; (4) collecting data to attempt to refute that hypothesis; and (5) determining whether the hypothesis is supported or not, based on the data collected.

In psychiatry, we are not very good at this. Sure, we may ask questions and listen to our patients’ answers (“observation”), come up with a diagnosis (a “hypothesis”) and a treatment plan (a “prediction”), and evaluate our patients’ response to medications (“data collection”). But is this only a charade?

First of all, the diagnoses we give are not based on a valid understanding of disease. As the current controversy over DSM-5 demonstrates, even experts find it hard to agree on what they’re describing. Maybe if we viewed DSM diagnoses as “suggestions” or “prototypes” rather than concrete diagnoses, we’d be better off. But clinical psychopharmacology does the exact opposite: it puts far too much emphasis on the diagnosis, which predicts the treatment, when in fact a diagnosis does not necessarily reflect biological reality but rather a “best guess.” It’s subject to change at any time, as are the fluctuating symptoms that real patients present with. (Will biomarkers help? I’m not holding my breath.)

Second, our predictions (i.e., the medications we choose for our patients) are always based on assumptions that have never been proven. What do I mean by this? Well, we have “animal models” of depression and theories of errant dopamine pathways in schizophrenia, but for “real world” patients—the patients in our offices—if you truly listen to what they say, the diagnosis is rarely clear. Instead, we try to “make the patients fit the diagnosis” (which becomes easier to do as appointment lengths shorten), and then concoct treatment plans which perfectly fit the biochemical pathways that our textbooks, drug reps, and anointed leaders lay out for us, but which may have absolutely nothing to do with what’s really happening in the bodies and minds of our patients.

Finally, the whole idea of falsifiability is absent in clinical psychopharmacology. If I prescribe an antidepressant or even an anxiolytic or sedative drug to my patient, and he returns two weeks later saying that he “feels much better” (or is “less anxious” or is “sleeping better”), how do I know it was the medication? Unless all other variables are held strictly constant—which is impossible to do even in a well-designed placebo-controlled trial, much less the real world—I can make no assumption about the effect of the drug in my patient’s body.

It gets even more absurd when one listens to a so-called “expert psychopharmacologist,” who uses complicated combinations of 4, 5, or 6 medications at a time to achieve “just the right response,” or who constantly tweaks medication doses to address a specific issue or complaint (e.g., acne, thinning hair, frequent cough, yawning, etc, etc), using sophisticated-sounding pathways or models that have not been proven to play a role in the symptom under consideration. Even if it’s complete guesswork (which it often is), the patient may improve 33% of the time (“Success! My explanation was right!”), get worse 33% of the time (“I didn’t increase the dose quite enough!”), and stay the same 33% of the time (“Are any other symptoms bothering you?”).

Of course, if you’re paying good money to see an “expert psychopharmacologist,” who has diplomas on her wall and who explains complicated neurochemical pathways to you using big words and colorful pictures of the brain, you’ve already increased your odds of being in the first 33%. And this is the main reason psychopharmacology is acceptable to most patients: not only does our society value the biological explanation, but psychopharmacology is practiced by people who sound so intelligent and … well, rational. Even though the mind is still a relatively impenetrable black box and no two patients are alike in how they experience the world. In other words, psychopharmacology has capitalized on the placebo response (and the ignorance & faith of patients) to its benefit.

Psychopharmacology is not always bad. Sometimes psychotropic medication can work wonders, and often very simple interventions provide patients with the support they need to learn new skills (or, in rare cases, to stay alive). In other words, it is still a worthwhile endeavor, but our expectations and our beliefs unfortunately grow faster than the evidence base to support them.

Similarly, “pseudoscience” can give results. It can heal, too: some health-care plans willingly pay for acupuncture, and some patients swear by Ayurvedic medicine or Reiki. And who knows, there might still be a valid scientific basis for the benefits professed by advocates of these practices.

In the end, though, we need to stand back and remind ourselves what we don’t know. Particularly at a time when clinical psychopharmacology has come to dominate the national psyche, and to command a significant portion of the nation’s enormous health care budget, we need to be extra critical and ask for more persuasive evidence of its successes. And we should not bring to the mainstream something that might more legitimately belong in the fringe.

Steve, the folks should be able to take whatever drug they want, provided 1) they are not coerced into doing so, and 2) they are not lied to or defrauded as to the effects and harms associated with the drug.

Wait, you mean patients diagnose themselves and direct prescriptions? The doctor does not take responsibility?

Do you think perhaps consumers might be unduly influenced by drug advertising to ask for drugs that they may not need?

As for complete information about effects and harms, that does not exist. The literature has buried or minimized effects and harms. This is one of the principal corrupting influences of commercial interests: leaving out anything that might cut into sales.

And doctors are hypnotized into ignoring or misidentifying adverse events. When was the last time you reported an adverse event from a psychiatric drug to the FDA?

My dear Iatrogenia, individuals are influenced by influential people out to make money everywhere all the time: look no further than the porn industry.

Regarding effects and harms: I hope my clients (parents) don’t expect me to know everything there is to know about the remedies I prescribe. Perfect knowledge is unattainable. The plea to my colleagues is to stop lying to their clients regarding what IS KNOWN about mental illness and its treatment.

Regarding prescriptions and doctor involvement: if you expand your historical lens a little wider, you will observe that many substances now called “dangerous drugs” were only last century available over-the-counter. I believe that we own our bodies and that the State ought to have as little to say as possible regarding what we (ADULTS) put in those bodies. To the extent that I “take responsibility”, I am acting as an agent of the State, not of the client. It’s important that people understand this.

I have seriously wondered at times whether some of my psychiatrists through the years could even read a graph when I showed up with one at my office visits.

Here is my take on it: clinical psychopharmacology has been corrupted.

http://hcrenewal.blogspot.com/2009/04/in-defense-of-psychiatric-diagnoses-and.html

From my perspective, psychopharmacology is driven by faith, and the exotic recipes shared by experts are as the mumblings of witch doctors, sagely nodding to each other as they expound upon their pet formulations.

The difference being the powerful drugs applied to the human brain by psychopharmacology do so much more harm than the remedies dispensed by witch doctors, who’ve learned by trial and error over the generations not to hurt their patients and create bad word of mouth. Bad for business, you know?

Like many religions, psychopharmacology is supported by an extensive literature, rich in self-reference and tautology, rationalizing its practices; rich and respected high priests; and a devoted following uncritically repeating its wisdom everywhere.

It must be a very uncomfortable feeling to fall out with the true faith. I feel for independently minded psychiatrists. You really need your own organization. How about joining the American Psychological Association and forming a psychiatry wing? Don’t forget to sign their open letter about the DSM-5 at http://www.ipetitions.com/petition/dsm5/

Thanks for the link. I signed the petition

Me too.

Steve, it seems that within your post you have given the answer: “we need to be extra critical and ask for more persuasive evidence of its successes.” The dx, signs, symptoms, and self-reporting of patients are seemingly subjective, on the part of both patient and doc. Is it possible, given the vagaries of the mind, the DSM, and practitioners, to have objective, evidence-based data?

Nicely said. I’m a great fan and a somewhat disillusioned trainee trying to figure out what’s my best bet in the long run. Awesome blog!

Madams/Sirs,

Please read the “Response to the American Psychiatric Association: DSM-5 development” by British Psychological Society in pdf documents, as linked here: http://apps.bps.org.uk/_publicationfiles/consultation-responses/DSM-5%202011%20-%20BPS%20response.pdf

Best Regards

AB

Rob Lindemann: Please forgive me if I didn’t seem to understand you. Thank you for signing the open letter to DSM-5. I agree medicine would be much advanced if your colleagues stopped lying about what is known about mental illness and its treatment.

My perspective on the lack of “perfect knowledge” regarding psychiatric drugs is this: The last 20 years of scientific literature regarding the efficacy of these drugs is hopelessly contaminated by commercial interests and a fantasy of biological validity for psychiatry.

Primary research that is garbage gets recycled into Cochrane, meta-analyses, and survey articles. It lives on forever in reference spirals. None of the tools clinicians rely on to stay up-to-date is worth anything: garbage goes in, garbage comes out.

Much of what clinicians think they know about utilizing psychiatric drugs is based on this garbage, including diagnosis, prescription, dosage, and drug combinations. The information that filters to the pediatrics journals isn’t any better.

The rest of medicine may lack “perfect knowledge” about treatments, and it is also suffering from commercial incursion. But the corruption of knowledge about psychiatric drugs is nearly total.

Personally, I believe the studies that have negative findings for psychiatric drugs are more credible. They, at least, are probably less affected by commercial interests.

Here’s an interesting study about the rhetoric of denial regarding psychiatric drug adverse effects: http://www.ncbi.nlm.nih.gov/pubmed/19342139

I surely wish doctors would stop responding to psychiatric drug complaints with: “All drugs have side effects”; “Don’t throw the baby out with the bathwater”; “There is no perfect knowledge”; and my personal favorites, “The benefits outweigh the risks” and “that side effect is very rare” — when the risks are so drastically under-recognized.

Psychiatry is a pseudoscience. And that’s because people are complex, or “pseudoscientific.” That doesn’t mean we don’t help people. But it does mean we should be wary of exaggerated claims as to how and how much we help people. Big Pharma and its pseudoscientific psychiatrists (and psychologists) don’t get it. And they never will.

Steve,

Nice post.

But here’s a thought: we do no better at proving that psychotherapy is effective. So what are we left with? Should we close up shop and go home? Should we tell people it’s hopeless?

Dinah

There you go again, Dinah!

For you the alternative is always “What? We should pack it in and go home?” It’s a false dichotomy.

Psychotherapy may not work for everyone, but at least it is not sold as something it’s not (see ‘chemical imbalance theory’).

Ha! I agree with this post in its entirety. I am an early-career psychiatrist who’s quickly become disillusioned with my chosen profession (so much so that I consider doing another residency). However, I disagree that psychotherapy is much better than the hot mess that is “psychopharmacology.” The theoretical underpinnings of dynamic psychotherapy are more absurd than all this serotonin/nor-epinephrine/dopamine talk. Perhaps all we should do is CBT, as it seems to be the only thing we do that has any decent evidence behind it.

Dinah,

We may not be able to prove that psychotherapy is effective, but the one thing it does have going for it is, for lack of a better phrase, face validity.

I know that’s not the proper use of the term, but let’s face it: the strategies, tips, and skills one learns from therapy usually correlate closely with the symptoms and problems one is trying to overcome. On the other hand, there’s quite a gulf between the ingestion of a chemical compound and the ability to overcome those same obstacles.

One just feels right, the other is a leap of faith.

With psychopharmacology such mumbo-jumbo and the benefit vs risk proposition so questionable, I simply do not get the argument “we must prescribe some kind of medication or we can’t help patients.”

But I sure do understand “If I didn’t prescribe psychiatric drugs, I wouldn’t have a business!!!”

ANOTHER dirty little secret of Psychiatry revealed! This is turning out to be the day for revelations!

No, Dinah.

You should pack up and go home, because things are never hopeless.

And the last thing someone needs when they are in deep suffering is a conventional psychiatrist such as yourself, who tells them they have lifelong, incurable brain disorder; and drugs them into oblivion, leaving them void of the one thing they need the most – hope.

Pharmacological psychiatry offers no hope.

Pack it up.

Go home.

Duane

P.S.: I put up what I thought was my last comment several months ago… Changed my mind, left another one. Sue me.

Pharmacological psychiatry is especially useless (and harmful) for people who are diagnosed with “schizophrenia”…

From Peter Breggin, M.D. –

“The last person you want to drug, or to tell they have a biological disorder is a 17 year-old girl who doesn’t know if she’s the Virgin Mary… That person needs such gentleness and patience and understanding and relationship. That human being really needs to be cared for in everything that word means.” “What we do instead is to brutalize the most vulnerable people.”

His comments come 6:20 into this clip –

http://www.youtube.com/user/dmackler58#p/u/13/f6HGS0O9yEg

IMO, this clip, particularly the words of Dr. Breggin should be required viewing for all medical students specializing in psychiatry; along with those who are in practice.

Conventional psychiatry has it all backwards!

Duane

Duane, I sometimes think you would do better to use quotes, clips, YouTube, etc. if they came from someone who agrees with your beliefs but is not known for his anti-drug stance, pretty much from the get-go. (I remember reading his books in college in the ’70s, and his screeds against meds and psychiatry.)

Carol,

Don’t like Dr. Peter Breggin?

How about Gary Kohls, M.D. (one of many others)…

Psychotropic Drugs: The Hidden Dangers –

If it’s the TRUTH that bothers you (not so much Breggin per se), there’s not much I can do to help… Other than encourage you to face the facts… face the truth… no matter how painful it is.

It has a way of setting us free, Carol.

Duane

Dinah, psychotherapy is helpful if for only one reason: it gives the person contact with another who (hopefully) wants to help, someone in whom they can confide without fear of their secrets being exposed, and someone whose attention is fully focused, at least for 45 – 50 minutes, on them and them alone. There is no substitute for that. (That being said, I do not know that you need a Ph.D, M.D., or even MSW to do that.)

“I do not know that you need a Ph.D, M.D., even MSW to do that”

There in a nut-shell, is one of psychiatry’s dirty little secrets.

In fact, peer support has been found to be effective, too.

What people in distress need is sympathetic company. I can see how a mental health professional might offer more of a feeling of safety and security for some people.

I can also say from personal experience some PhD therapists are jerks that can make people feel worse. But at least they’re not practicing invasive brain surgery with chemical tools.

And psychology and social work. (I have a BA in psych, which is worthless, but I became a licensed hypnotherapist in a 6-month weekend course, which included practicums. I didn’t believe in hypnosis at first. Now I do, but I think part of the benefit to the client comes when I spend an hour or 2 doing intake: listening, listening, and listening more.)

Kevin Nasky — Hot mess!! Very good.

One private practice growth area for new psychiatrists is untangling the polypharmacy many patients suffer, minimizing their drug burdens, identifying underlying medical causes (such as B12 deficiency), and helping them taper safely off their psychiatric cocktails.

Then there’s helping them recover from iatrogenic damage and undiagnosing whatever concocted diagnoses they come in with.

You’d be surprised how much demand there is for this.

Dr. Balt,

1 Boring Old Man has a great post on this subject –

http://1boringoldman.com/index.php/2011/11/13/comment-on-a-comment/

Duane

It is uplifting reading discussions that ought to be taking place.

If one takes into consideration 2 pieces.

1. patients put a great deal of trust in doctors.

2. psychiatrists either are pretending to know what they are doing, or aren’t reading enough to find out what is already known.

Which isn’t very worthy of trust.

Considering the mass prescribing of anti-psychotics that completely lack scientific support coupled with ample press of harmful effects.

Is it pretending, or ignorance?

My family suffered horribly, for over a decade until we found the best doctor—the internet.

I work at a University, I have online access to all the journals. Do the practicing docs have this access?

The author of Thought Broadcast is a thoroughly trained neurologist. Does every psychiatrist carry a map of the brain around in his head?

Yeah, that’s a little self-referential joke.

It’s profitable blood letting. I think history will look back and laugh that we ever believed this stuff.

100% agree with this. No medicine ever works. It makes things even worse. Better to learn to cope with emotions and be self-sufficient than to become dependent on medicine.