Which medical professional is best equipped to diagnose and treat depression? Speaking as a psychiatrist, I am (of course) inclined to respond that only those with a sophisticated understanding of psychological assessment, DSM-IV criteria, and differential diagnosis can accurately diagnose depression in their patients. In fact, I’ve written about how the overreliance on checklists and rapid interviewing (which may happen more frequently in primary-care than in mental health settings) may inflate rates of depression, mislead patients, and increase health care costs.

Maybe it’s time for me to reconsider that notion. In a fascinating article published this month in the journal Family Practice, authors from the Munich Technical University reviewed 13 studies surveying 239 primary-care providers about their experience in diagnosing depression. The paper sheds some much-needed light on this topic, on which I have (apparently) been rather misinformed.

The take-home message from this study is one that should have been obvious to me from the start: namely, that family practice doctors, almost by definition, are perfectly positioned to recognize depressive symptoms in their patients, simply by virtue of their long-term knowledge of their patients’ history and “baseline functioning.” As the authors write: “A relationship that has developed over the years [helped to] reveal symptoms of depression… When clinicians were not familiar with the patient, they acknowledged that the patient was less likely to share personal information, making diagnosis more difficult.” In other words, how a patient’s symptoms fit into the “big picture” of his or her life is far more informative than the “snapshot” offered by a single point in time.

Similarly, I was encouraged by the fact that most family practitioners (“FPs”) don’t rely on checklists or lists of symptoms (or even the DSM-IV) to make their diagnoses. In fact, the authors write that FPs, rightly, “express doubts about the validity of the diagnostic concept of depressive disorders” and that they “consider depressive disorders to be syndromes … where etiological and contextual thinking are more relevant than symptom counts.” They see depression “as a problem they are faced with in their everyday work rather than as an objective diagnostic category.” Finally, FPs typically rely on a “rule-out” strategy using a “wait and see [or] watchful waiting” approach, rather than working through a list of specific symptoms.

Wow! Here’s a challenge: spend an hour or two reading the DSM-IV (or any of the dozens of papers on STAR*D, or any clinical trial of a new antidepressant), then read the above statements. Which strategy most accurately captures the phenomenology, the reality, the experience, of a patient with depression? Yeah, I thought so, too.

It seems to me that, if we are to trust these authors and their conclusions, then family practice docs really do understand their patients, and can recognize the subtle emergence of depressive symptoms over time, more so than the specialists (like me) who might be asked for an opinion long after the disease has taken hold. Before we get carried away, though, a few caveats are in order.

For one thing, the depiction of the FP as “being aware of their patients’ everyday lives” might have been true for the Marcus Welbys of the 60s and 70s, but (unfortunately) not for the HMO drones, the docs-in-a-box, or the clinic rotators of today, who are saddled with more bureaucratic paperwork—or, excuse me, EMR work—than anything resembling patient care. Family practice docs (at least in the US) are more likely to be 9-to-5 employees than true advocates for the patients on their roster.

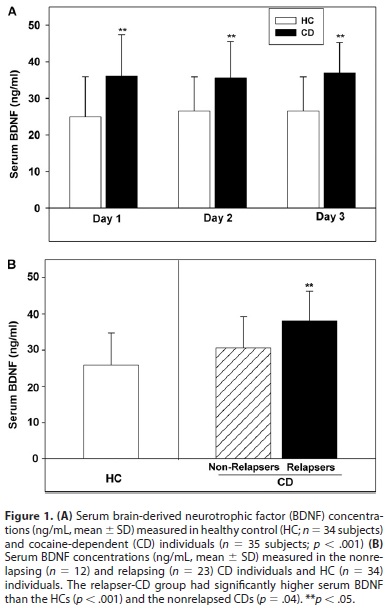

Secondly, FPs may not be diagnosing the same “depression” that my colleagues and I see in the psychiatric setting. The article states that FPs see depression “mainly as a reaction to emotionally draining circumstances such as other illnesses, situation at work or social factors,” and that “only a minority of FPs saw depression as … a biochemical imbalance.” People can indeed become “depressed” after breaking up with a girlfriend, losing a job, or being diagnosed with cancer. But is this a “chemical imbalance” that we should treat with Prozac and Abilify? Eh, maybe not.

In a similar vein, the authors admit that “primary care patients with depressive disorders tend to be less severely depressed [and] experience a milder course of illness.” As it turns out, those are precisely the patients who tend not to respond to antidepressant therapy. Furthermore, the authors write that these patients have “more complaints of fatigue and somatic symptoms, and are more likely to have accompanying physical complaints.” This is exactly what one might expect: patients with fatigue, exhaustion, or any somatic complaint (nausea, diarrhea, constipation, headache, sexual dysfunction, acid reflux, congestion, abdominal tenderness, morning stiffness, sciatica, you name it…) are the most likely to say that they feel generally pretty crappy. Or, to use the authors’ words (and the psychiatric vernacular), “less severely depressed.”

So family practice docs—those on the “front lines”—may be the most qualified to diagnose depression (or any mental illness, for that matter) because of their experience and knowledge of their patients. But this is only true if they actually KNOW their patients (which is less likely in this age of mangled managed care), and if these same doctors recognize the difference between clinical depression and environmental triggers that, for lack of a better word, just plain suck.

Given the above caveats, can (or should) depression be diagnosed and treated in the family practice setting? Perhaps “depression” can, while “Depression” may still be within the realm of the psychiatric professional. Until we determine the best way to distinguish between the two, let’s make sure we attend to patients’ symptoms in context: in the context of their long-term history, environmental triggers, and life events. These may be precisely the situations in which the family practitioner knows best.

[If any reader would like a PDF copy of the article referenced here, I’d be happy to send it to you. Please email me.]

Posted by stevebMD